Why PassiveDataKit? Part I: A History

For the past decade, I have been building systems that automatically gather data about end-users by leveraging the growing number of sensors and other sources of passive data available on modern computers, smartphones, wearables, and devices in the environment. In this post, I describe my personal history with this kind of technology and how that history has led to Audacious Software's most important project: PassiveDataKit.

My original forays into passive data collection date back to my senior year of college at Princeton's Computer Science department. Since I pursued my CS degree from the liberal arts angle (as opposed to the engineering angle), I traded in a larger course load for more project-based work, culminating in a final senior thesis project. Advised by Dr. Brian Kernighan (the "K" in K&R C, and the "K" in AWK), I spent more than a year working on a project building a distributed system for gathering and making sense of geographic data.

While the focus of my senior project was split between GIS data already existing in databases and data gathered in the field, my earliest passive data collection work included rudimentary active geotrackers (built from Compaq's PocketPC, a mobile phone with basic WAP capabilities, and a hardware GPS receiver) as well as programs that could communicate with Garmin GPS receivers to build maps offline. I did quite well on the project, but never leveraged it beyond a student project in my early career outside the University. Part of the reason was that I was not employed in a capacity to continue developing that technology stack, and the other part was that similar efforts were advancing in the private sector faster than a newly graduated software developer could keep up with. (Google Earth implements most of the original vision I was chasing in college.)

I worked as an academic software developer at Northwestern University building tools primarily for teaching and learning within the University. I left that position in 2006 to move "up campus" to the School of Communication to pursue a Ph.D. in the Media, Technology, and Society program (a wonderful program that I recommend highly). As an MTS student, I worked on a variety of projects, but my budding research interests began with improving the state of notification systems, which led me to context-aware systems (under the advisement of Dr. Darren Gergle).

During my Ph.D. studies, my largest project was a Mac application called Pennyworth. The application, named in honor of Batman's butler, used all the available mechanisms I could employ on a Mac computer to gather passive data about its user so that I could train machine learners (C4.5 decision trees) to recognize the user's semantic location, activity, and social context. The application and accompanying documentation are still available on the original Pennyworth website.

The attributes of the user that Pennyworth was attempting to learn were not intended for simple informative purposes; I built a suite of tools that consumed the context information. An app called Pennyworth Punch Clock was a context-aware time tracker that would attempt to leverage Pennyworth's activity broadcasts to generate an accurate time report for its user. An app called Cidney used location and social context to manage notifications for incoming phone calls from third parties on traditional landlines. The Shion home automation system grew out of a desire to use activity inferences to optimize the local environment to support the current activity (e.g., brighter lights for active tasks such as writing, a softer atmosphere for recreational activities such as playing games or watching movies). In addition to standalone apps that consumed context predictions, Pennyworth also featured a robust scripting environment that executed AppleScript tasks such as shutting down music sharing when on third-party networks and configuring other apps on a per-context basis.

Recognizing that focusing on a single platform would hobble any hopes I had for wider adoption of Pennyworth, I completed an initial port of that system to the Windows desktop platform, called Jarvis (a name now well-known from the "Iron Man" movies). While I was writing Jarvis, I was also transitioning from a Ph.D. student into an entrepreneur late in 2008, working under the theory that there was value in these context-sensing systems and that someone just needed to build the systems to illustrate that value.

In the first incarnation of Audacious Software, I worked with David Mohr and Mark Begale to translate some of the context-sensing work I had done to a mobile context. In 2009, the iPhone's background features were non-existent and the Android ecosystem consisted solely of an odd device made by HTC that only T-Mobile was selling. Nokia was the dominant smartphone maker at the time, and thus Mobilyze was born.

Written for the Symbian operating system, Mobilyze consisted of a user-facing app that could be scripted with a variant of JavaScript to prompt users to answer questions at particular times. It was paired with another app that managed collecting data from sensors on the phone and transmitting that information via a custom XMPP-based protocol to a remote data collection server for analysis and model generation. (The battery life was predictably terrible, but the system did work.)

In addition to my work on Mobilyze, I was also developing Shion from a small toy app into a full home-automation application and service. The original intent was to give away the Mac app that managed the home control and to sell an online service that would allow users to pay a monthly subscription to use mobile apps to monitor and control their environment. After a couple of years of development, I pivoted from that idea when I joined Power2Switch as their CTO for a summer. My angle with Shion and Power2Switch was that it might be possible to use the monitoring capabilities of Shion to collect passive data about the state of various devices and begin to build smart systems that would give folks paying heating and electricity bills the ability to automatically generate budgets that would lower energy consumption. Given that Power2Switch was unable to transition from a business model where their revenues were a commission on electricity usage, this technical vision didn't go very far in that startup context, and I went ahead and open-sourced the entire technology stack. After I left Power2Switch's CTO role, I resumed consulting.

In addition to these projects, I also did two notable passive data projects for two researcher clients. In 2010, I built an HTTP proxy for Ericka Menchen-Trevino that collected the web usage of a cohort of research subjects during the 2010 election season in Chicago. The passive data we gathered proved valuable in supporting more active techniques, such as interviews and ethnography, to create a more nuanced and complete picture of how web users' online media consumption habits influence their political outlook and behaviors.

Another researcher, Yuli Patrick Hseih, was interested in learning more about how survey respondents behaved while completing online questionnaires. We created EgoGalaxy, an online survey builder that also included a rich event-generation framework to capture behaviors such as changing answers to questions, skimming questions too quickly, and skipping around surveys. Instead of a simple output that reflected only the respondents' final answers, EgoGalaxy generated a stream of data that would allow researchers to reconstruct the respondents' activity throughout the survey. I would reuse this event-driven approach in the IntelliCare project.
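To make the difference between a final-answers export and an event stream concrete, here is a minimal Python sketch. It is not EgoGalaxy's actual code or schema: the `SurveyEvent` fields, the `"view"` and `"answer"` event kinds, and the two-second skim threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SurveyEvent:
    """One timestamped respondent action (hypothetical schema)."""
    timestamp: float             # seconds since the survey was opened
    kind: str                    # "view" or "answer" (assumed event kinds)
    question: str                # question identifier
    value: Optional[str] = None  # answer value, only set for "answer" events


def _in_order(events):
    """Return the events sorted by timestamp."""
    return sorted(events, key=lambda e: e.timestamp)


def final_answers(events):
    """Replay the stream; the last answer recorded for each question wins."""
    answers = {}
    for event in _in_order(events):
        if event.kind == "answer":
            answers[event.question] = event.value
    return answers


def changed_answers(events):
    """Return questions whose answer was later replaced by a different value."""
    last, changed = {}, set()
    for event in _in_order(events):
        if event.kind == "answer":
            if event.question in last and last[event.question] != event.value:
                changed.add(event.question)
            last[event.question] = event.value
    return changed


def skimmed_questions(events, min_seconds=2.0):
    """Return questions answered less than min_seconds after being viewed."""
    viewed_at, skimmed = {}, set()
    for event in _in_order(events):
        if event.kind == "view":
            viewed_at[event.question] = event.timestamp
        elif event.kind == "answer" and event.question in viewed_at:
            if event.timestamp - viewed_at[event.question] < min_seconds:
                skimmed.add(event.question)
    return skimmed


# A made-up session: q1 is answered, q2 is answered very quickly,
# then the respondent goes back and changes q1.
events = [
    SurveyEvent(0.0, "view", "q1"),
    SurveyEvent(5.0, "answer", "q1", "yes"),
    SurveyEvent(6.0, "view", "q2"),
    SurveyEvent(6.5, "answer", "q2", "often"),
    SurveyEvent(9.0, "view", "q1"),
    SurveyEvent(12.0, "answer", "q1", "no"),
]

print(final_answers(events))
print(changed_answers(events))
print(skimmed_questions(events))
```

A conventional export corresponds to `final_answers` alone. The other two functions recover behavior (a revised answer, a skimmed question) that only exists because the individual events were kept.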

After fighting a losing battle with work-life balance, in the fall of 2012, I ceased my consulting activities and joined the Center for Behavioral Intervention Technologies (CBITs) as a software developer. CBITs was a relatively new research center at Northwestern University's Feinberg School of Medicine headed by David Mohr (on the research side) and Mark Begale (on the technology side). CBITs was focusing on web- and mobile-based mental health tools that were iterations of projects like Mobilyze.

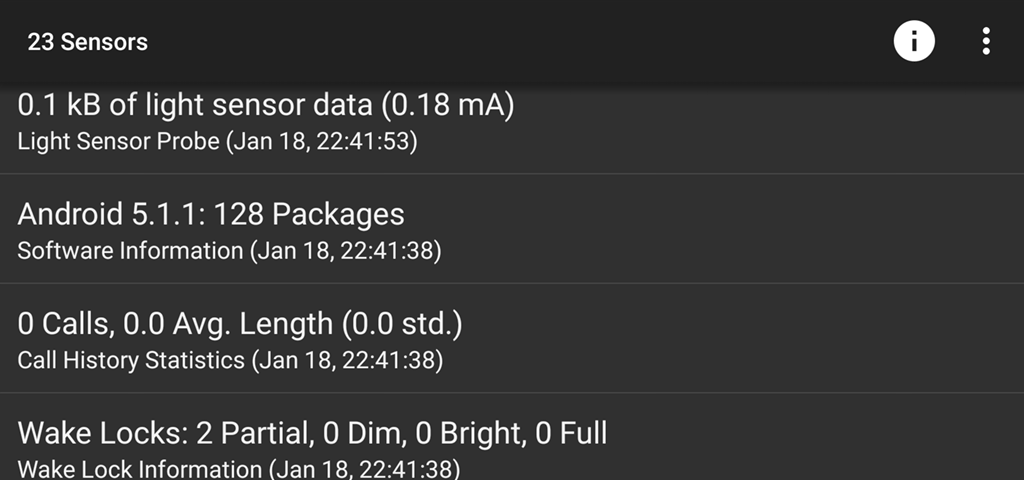

At CBITs, I had two main projects: Purple Robot and IntelliCare. Purple Robot was an evolution of the original Mobilyze architecture, this time for Android devices. It started as a way to gather and transmit sensor data from a mobile phone, but grew to also include a robust scripting framework that gave other apps built using web-inspired platforms such as Cordova/PhoneGap access to advanced system features. These included scheduled job execution, background data transmission, and notification access to native dialogs, home-screen widgets, and the system tray. In addition to gathering sensor and app data from the local device, Purple Robot also included interfaces that allowed it to communicate with third-party network services such as Facebook, Foursquare, Fitbit, and more. Purple Robot transmitted its data to an HTTP service for analysis and processing. (This remote service was originally written in Node.js by Evan Story, and I later ported it to Django.)

My other major CBITs project was IntelliCare. IntelliCare is a suite of 13 small native apps (written by me and several other developers and clinical collaborators) that each attempt to address one aspect of depression and anxiety. The apps are designed to be paired with a recommendation engine that identifies clusters of engagement patterns across the apps and uses them to suggest the apps most likely to be useful to an individual user. To capture the fine-grained engagement data that the recommendation engine would need, I recycled the event-driven approach originally employed in Hseih's EgoGalaxy to generate a stream of events from thousands of end-users around the world. (This work is in progress, and I'll be happy to report more as its ultimate effectiveness is measured.)

This leads us to the present. Last November, I conveyed my intent to resign from my position at CBITs to resume my existence as a consultant/entrepreneur focusing on creating infrastructure and opportunities for products that generate value through ethical passive data collection and application. I'm calling this project PassiveDataKit, and in my next post, I'll describe how it unifies two themes in my career over the past decade, event-driven engagement capture and sensor-based context-awareness, into a single framework designed for the next decade.